Same fundamental skills

Designing a voice user interface requires the same fundamental skills as designing a visual user interface. The design of any human-computer interaction centers around formative user research, rapid prototyping, and regular testing. A voice user interface is simply a new and exciting way to transmit information. The design process remains largely the same, with a few nuances. This blog post will explore some of the major nuances specific to designing voice user interfaces.

Current state of voice interfaces

Most voice user interfaces are applications that augment the capabilities of a preexisting voice assistant. Large tech companies, such as Amazon, Apple, Google, and Microsoft, have built advanced voice assistants (e.g., Alexa, Siri) that interpret speech using natural language processing technology. The companies that build these voice assistants let third parties add custom functionality to them. For example, a travel booking company could create an app for Alexa that lets users book a hotel room. Amazon and Microsoft call these applications “skills”, Apple calls them “intents”, and Google calls them “actions”. The applications give users an alternative way to interact with their favorite products and services. They are typically limited to lookup and form-input tasks, such as checking availability and booking a hotel room ("are there vacancies tonight at the Sleepy Inn?"). Because voice assistants can only present information sequentially, the applications do not lend themselves well to discovery tasks, such as leisurely browsing a shopping website ("help me shop for a pair of shoes").

Elements of voice design

Amazon presents a useful framework for conceptualizing the transfer of information between a human and a voice assistant. According to Amazon, there are four elements to voice design:

- Utterance: The words the user speaks

- Intent: The task the user wishes to complete

- Slot: Information the app needs to complete the task

- Prompt: The response from the voice assistant

The voice assistant must first interpret the words the user speaks (utterance) and match those words to a task that the assistant has been programmed to handle (intent). Once the voice assistant identifies the user’s intent, it must identify and obtain any extra information that is required to complete the task (slot) from the user. To do so, the voice assistant replies in a manner designed to keep the conversation moving forward (prompt). For example, the list below shows the elements of a voice user interface for booking a room at a fictitious hotel:

- Utterance: I want to stay at the Sleepy Inn

- Intent: Reserve a hotel room

- Slots: Check-in date, length of stay, number of guests, room type

- Prompt: Ok, when would you like to visit the Sleepy Inn?
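To make the relationship between these four elements concrete, here is a minimal sketch in Python. It is not Amazon's API; the `Slot` and `Intent` classes, the slot names, and every prompt except the first are hypothetical and exist only to illustrate the idea that the assistant keeps prompting until each slot is filled.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Slot:
    name: str                     # piece of information the task needs
    prompt: str                   # what the assistant says to ask for it
    value: Optional[str] = None   # filled in once the user answers


@dataclass
class Intent:
    name: str
    slots: List[Slot] = field(default_factory=list)

    def next_prompt(self) -> Optional[str]:
        """Return the prompt for the first unfilled slot, or None if all are filled."""
        for slot in self.slots:
            if slot.value is None:
                return slot.prompt
        return None

    def fill(self, slot_name: str, value: str) -> None:
        """Store the user's answer for the named slot."""
        for slot in self.slots:
            if slot.name == slot_name:
                slot.value = value
                return
        raise KeyError(slot_name)


def make_reserve_room_intent() -> Intent:
    # Only the first prompt comes from the example above; the rest are invented.
    return Intent(
        name="ReserveHotelRoom",
        slots=[
            Slot("check_in_date", "Ok, when would you like to visit the Sleepy Inn?"),
            Slot("length_of_stay", "How many nights will you be staying?"),
            Slot("number_of_guests", "How many guests will be staying?"),
            Slot("room_type", "What type of room would you like?"),
        ],
    )


if __name__ == "__main__":
    intent = make_reserve_room_intent()
    print(intent.next_prompt())           # asks for the check-in date
    intent.fill("check_in_date", "Friday")
    print(intent.next_prompt())           # moves on to the next empty slot
```

Ordering the slots in a list keeps the conversation predictable: the assistant always asks for the first piece of missing information, which mirrors how a prompt is meant to keep the conversation moving forward.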

Understanding words vs understanding meaning

Natural language processing (NLP) technologies have become very good at determining the words a user speaks, but not what the user means to say. Voice designers are responsible for writing a list of all the possible utterances a user might speak. The voice assistant uses NLP to translate the spoken utterance into a string of text, then queries that text against the dataset of possible utterances. The comprehensive list of utterances is built using preliminary user research and refined over time using data generated by the voice assistant. This elaborate process gives a voice assistant the illusion of intelligence. In reality, the assistant is just playing back pre-recorded dialogs written by the design team.
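The lookup step described above can be sketched in a few lines of Python. This is a deliberately simplified illustration, assuming the speech has already been transcribed to text and that matching is an exact lookup after light normalization. The intent names and the `match_intent` helper are hypothetical, and real assistants are more flexible than an exact match.

```python
import string
from typing import Dict, List, Optional

# Hypothetical utterance list a design team might write for a hotel app.
SAMPLE_UTTERANCES: Dict[str, List[str]] = {
    "ReserveHotelRoom": [
        "I want to stay at the Sleepy Inn",
        "Book a room at the Sleepy Inn",
    ],
    "CheckAvailability": [
        "Are there vacancies tonight at the Sleepy Inn",
    ],
}


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse repeated whitespace."""
    without_punct = text.lower().translate(str.maketrans("", "", string.punctuation))
    return " ".join(without_punct.split())


# Map each normalized utterance to the intent it belongs to.
UTTERANCE_INDEX: Dict[str, str] = {
    normalize(utterance): intent
    for intent, utterances in SAMPLE_UTTERANCES.items()
    for utterance in utterances
}


def match_intent(transcript: str) -> Optional[str]:
    """Return the intent whose utterance list contains the transcript, if any."""
    return UTTERANCE_INDEX.get(normalize(transcript))


if __name__ == "__main__":
    print(match_intent("Are there vacancies tonight at the Sleepy Inn?"))
    print(match_intent("Help me shop for a pair of shoes"))  # not in the list
```

An utterance the team never anticipated returns no intent at all, which is why the utterance list has to be refined over time with real usage data.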

UX design process for voice

According to the Google conversation design guidelines, a voice design project should produce at least two deliverables: a series of sample dialogs and a diagram of the conversation flow. Sample dialogs often take the form of a script or storyboard. Flow charts are useful for documenting the conversation flow.

A successful voice design process will closely mirror any other user experience design process. For example, the designer should focus on a single persona and use case at a time. Additionally, it’s helpful to start at a low fidelity, test frequently, and refine the design over time. In short, good design is good design, and a good designer should not have to change their process much to adapt to voice.

Prototyping a voice user interface

- Begin by listening to and transcribing human-to-human conversations similar to your interface. For example, if designing a voice user interface for booking a hotel room, listen to people converse with travel agents.

- Identify the scope of your interface’s functionality. Keep the scope simple at first. For example, my hotel app can book a room, but it cannot provide information about special events happening at the hotel.

- Write the sample dialogs. Begin by writing the shortest possible route to completion, as if the user provided all the necessary information.

- Build complexities into the conversation logic, such as dialogs for error handling and dialogs specifically for first-time users.

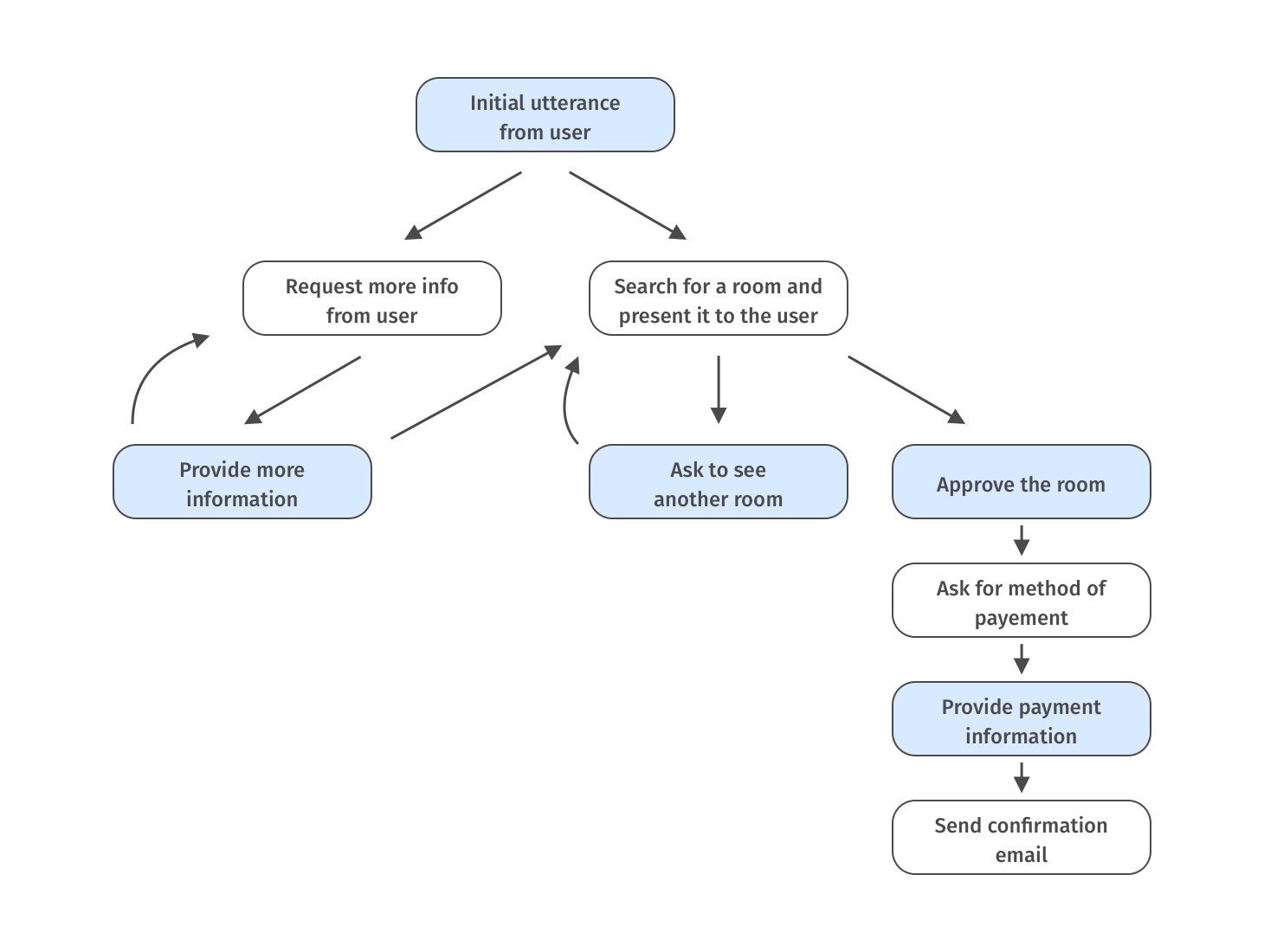

- Using a flow chart, document the conversation logic and relationships between each script.

Example of a conversation flow. Blue boxes represent dialog spoken by the user and white boxes represent dialog programmed into the interface.
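A conversation flow like this can also be prototyped as a small graph before any real voice platform is involved. The sketch below is one possible way to do that in Python, not a method prescribed by Amazon or Google. The node names, the reprompt wording, and the `run_dialog` helper are all hypothetical, and an empty reply stands in for speech the assistant could not understand.

```python
from typing import Dict, List, Optional

# Each node holds the scripted prompt, an error-handling reprompt,
# and the node to move to once the user gives a usable answer.
FLOW: Dict[str, Dict[str, Optional[str]]] = {
    "check_in": {
        "prompt": "Ok, when would you like to visit the Sleepy Inn?",
        "reprompt": "Sorry, I didn't catch that. What date would you like to check in?",
        "next": "nights",
    },
    "nights": {
        "prompt": "How many nights will you be staying?",
        "reprompt": "Sorry, how many nights?",
        "next": "confirm",
    },
    "confirm": {
        "prompt": "Great, your room is booked.",
        "reprompt": None,
        "next": None,
    },
}


def run_dialog(user_replies: List[str], start: str = "check_in") -> List[Optional[str]]:
    """Walk the flow and return the lines the assistant would speak."""
    node = start
    spoken = [FLOW[node]["prompt"]]
    replies = iter(user_replies)
    while FLOW[node]["next"] is not None:
        reply = next(replies, None)
        if reply is None:
            break                      # ran out of scripted user replies
        if reply.strip() == "":
            spoken.append(FLOW[node]["reprompt"])
            continue                   # error path: stay on the same node
        node = FLOW[node]["next"]
        spoken.append(FLOW[node]["prompt"])
    return spoken


if __name__ == "__main__":
    # Shortest route to completion, then a run with one misunderstood reply.
    for line in run_dialog(["Friday", "two nights"]):
        print(line)
    for line in run_dialog(["", "Friday", "two nights"]):
        print(line)
```

Writing the happy path first and adding the reprompts afterward follows the same order as the steps above: shortest route to completion first, complexity later.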


Testing the prototype

As with any other kind of design, it is important to test voice user interfaces early and often. Test your dialogs with real users as much as you possibly can. One helpful approach is a “Wizard of Oz” study design, where the user interacts with a fake device while the researcher sits “behind the curtain” and follows the voice assistant’s script. For added authenticity, the researcher should use a text-to-speech application to simulate a computerized voice.

Takeaways

- Chances are if you’re reading this blog, you already have the skills required to design a voice user interface.

- A voice assistant is not intelligent and cannot comprehend meaning. Rather, a voice assistant is just a very long list of possible conversation paths coupled with some logic.

- Using a voice design framework helps build structure into the design process. Always write the optimal script first, and build in complexity later.

Helpful links

- A thoughtful reflection by the BBC Voice + AI team on designing voice apps for children: https://www.bbc.co.uk/gel/guidelines/how-to-design-a-voice-experience

- Observations on the current state of voice UX by Jolina Landicho: https://www.uxbooth.com/articles/impact-of-voice-in-ux-design/

- Amazon has published a series of design guides for those who wish to build apps on the Alexa platform. I found this one particularly useful: https://developer.amazon.com/docs/alexa-design/script.html#write-the-shortest-route-to-completion

- A guide published by Google on writing sample dialogs: https://designguidelines.withgoogle.com/conversation/conversation-design-process/write-sample-dialogs.html#

Darek is a Research Associate at the User Experience Center. He is currently pursuing a Master of Science degree in Human Factors in Information Design from Bentley University.
